When the CEO Becomes the Chief AI Officer: A Framework for Measuring What Matters

Key Takeaways

Nearly three quarters of CEOs now own AI decisions, but while 74% of organizations want AI-driven revenue, only 20% get it. The gap isn’t technology. It’s measurement. Most companies track adoption. The winners track impact.

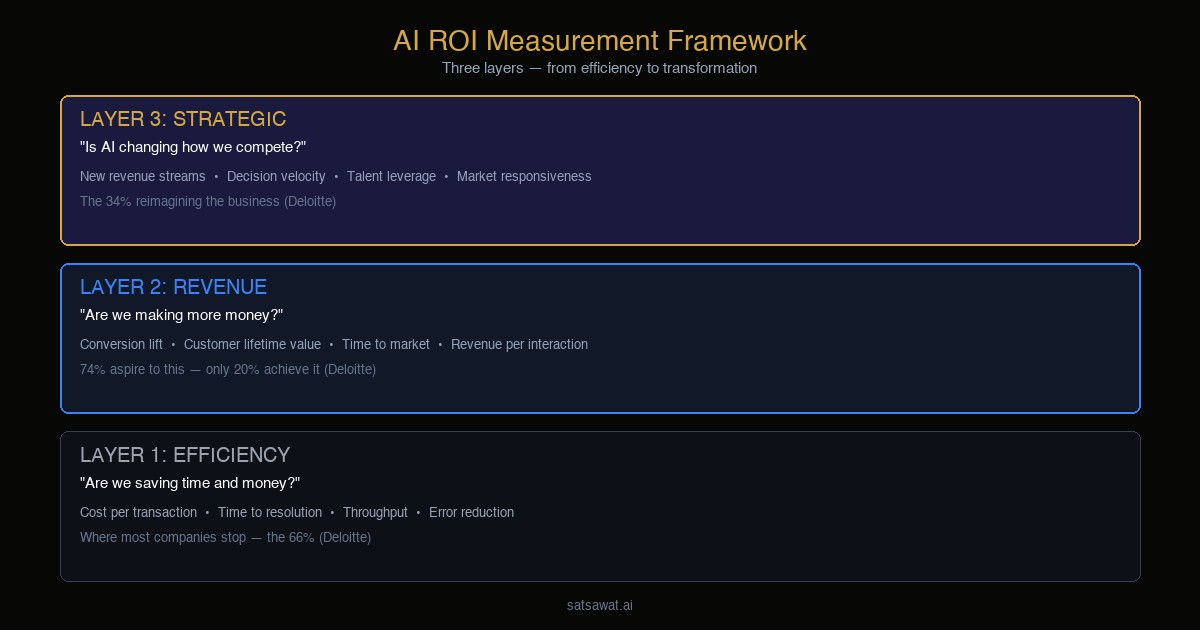

Three layers of AI ROI: Layer 1 (efficiency) tells you if AI works. Layer 2 (revenue) tells you if AI pays. Layer 3 (strategic) tells you if AI transforms. Most companies are stuck on Layer 1.

My take: If your AI dashboard doesn’t have a dollar sign on it, you’re in the 74% that’s hoping, not the 20% that’s delivering. The CEO who asks “how fast can we deploy?” loses to the one who asks “how do we measure real impact?”

The CEO Took the Wheel. Now What?

Happy Songkran to everyone celebrating the Thai New Year! During the break, I caught up with a few close friends who are CEOs of leading Thai companies. The conversation kept circling back to the same thing: they’re not hiring Chief AI Officers anymore. They’re doing it themselves. One told me, “If I delegate AI to someone else, I’m delegating the future of this company.”

They’re not alone. BCG’s AI Radar 2026, which surveyed 2,360 executives including 640 CEOs across 16 markets, found that nearly three quarters of CEOs now say they are their organization’s main AI decision maker. That is double last year’s share. Half believe their job is on the line if AI doesn’t deliver. (BCG, Jan 2026)

But here’s the disconnect. Deloitte surveyed 3,235 leaders across 24 countries: 66% report productivity gains from AI. Yet only 20% have actually increased revenue — despite 74% aspiring to. That gap should alarm every CEO who just doubled their AI budget.

The CEO owns the decision. The board wants the return. And most companies are measuring the wrong things to connect the two.

The Measurement Problem

I see this a lot in the field. The AI team reports adoption metrics: “We have 5,000 monthly active users on our AI assistant.” “Our agent handles 80% of tier-1 tickets.” “Model accuracy is 92%.”

But the management team asks: “So what? What’s the revenue impact? How much did we save? Are customers staying longer?”

Silence.

The gap between what AI teams measure and what the management team cares about is where most AI ROI stories die. McKinsey found the same pattern — the biggest barrier to scaling AI isn’t the technology or the employees. It’s leaders who aren’t steering fast enough toward business outcomes.

A Framework for Measuring AI ROI

After working with enterprises across banking, telecom, government, and retail for more than 10 years, I’ve landed on a framework that bridges the gap between what AI teams build and what boards need to see. Three layers — each one answers a different question.

Layer 1: Efficiency Metrics — “Are we saving time and money?”

This is where most companies start, and it’s valid. But it’s the floor, not the ceiling.

| Metric | What It Tells You | Example |
|---|---|---|
| Cost per transaction | Is AI cheaper than the manual process? | Agent handles support ticket for $0.12 vs $4.50 human cost |
| Time to resolution | Is AI faster? | Average resolution dropped from 24 hours to 8 minutes |
| Throughput | Can we handle more volume? | Processing 10x more applications with the same team |
| Error reduction | Is AI more accurate than the manual process? | Document processing errors dropped from 12% to 2% |

If you can’t show savings here, you have a technology problem. Most companies can. This is the 66% that Deloitte found reporting productivity gains — and it shows AI working hand-in-hand with your team.
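
To make the Layer 1 arithmetic concrete, here is a minimal Python sketch that turns the unit costs from the table into a monthly savings figure. The ticket volume and the 80% AI share are illustrative assumptions, and the function names are hypothetical; plug in your own numbers.

```python
def monthly_savings(manual_cost: float, ai_cost: float, ai_volume: int) -> float:
    """Gross savings from handling ai_volume transactions with AI instead of manually."""
    return ai_volume * (manual_cost - ai_cost)


def relative_change(before: float, after: float) -> float:
    """Fractional change from before to after (negative means a reduction)."""
    return (after - before) / before


# Unit costs and error rates come from the table; everything marked
# "hypothetical" is an assumption made only for this illustration.
MANUAL_COST = 4.50            # dollars per human-handled ticket
AI_COST = 0.12                # dollars per agent-handled ticket
TICKETS_PER_MONTH = 10_000    # hypothetical monthly volume
AI_SHARE = 0.80               # hypothetical share of tickets the agent handles

ai_tickets = round(TICKETS_PER_MONTH * AI_SHARE)
savings = monthly_savings(MANUAL_COST, AI_COST, ai_tickets)
error_change = relative_change(0.12, 0.02)  # document errors: 12% -> 2%

print(f"Tickets handled by AI: {ai_tickets}")
print(f"Gross monthly savings: ${savings:,.2f}")
print(f"Error rate change: {error_change:.0%}")
```

This is a gross figure. It leaves out platform, integration, and oversight costs, which you would subtract before putting the number in front of a board.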

Layer 2: Revenue Metrics — “Are we making more money?”

This is where the 74/20 gap lives. Most companies never get here because they never designed their AI to impact revenue in the first place.

| Metric | What It Tells You | Example |
|---|---|---|
| Conversion lift | Is AI helping close more deals? | AI-assisted recommendations increased conversion by 15% |
| Customer lifetime value | Are AI-served customers worth more? | Customers using AI features have 23% higher retention |
| Time to market | Is AI accelerating product delivery? | New product analysis that took 3 weeks now takes 2 days |
| Revenue per AI-assisted interaction | What’s each AI touchpoint worth? | Each AI-routed upsell generates $8.40 average revenue |

If your AI dashboard doesn’t have a revenue metric, you’re optimizing costs — not generating value. That’s the difference between the 66% and the 20%.
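
As a rough illustration of what a Layer 2 metric looks like in practice, the sketch below computes conversion lift against a control group and revenue per AI-assisted interaction. All counts and dollar amounts are hypothetical, chosen only so the results line up with the 15% and $8.40 examples in the table. It also assumes you have a comparable group of customers who were not served by AI.

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Share of sessions that ended in a conversion."""
    if sessions <= 0:
        raise ValueError("sessions must be positive")
    return conversions / sessions


def relative_lift(treated_rate: float, control_rate: float) -> float:
    """Relative improvement of the AI-assisted group over the control group."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (treated_rate - control_rate) / control_rate


def revenue_per_interaction(attributed_revenue: float, interactions: int) -> float:
    """Average revenue attributed to each AI-assisted interaction."""
    if interactions <= 0:
        raise ValueError("interactions must be positive")
    return attributed_revenue / interactions


# Hypothetical figures for illustration only.
control = conversion_rate(conversions=400, sessions=10_000)      # no AI assistance
ai_assisted = conversion_rate(conversions=460, sessions=10_000)  # AI recommendations

lift = relative_lift(ai_assisted, control)
per_touch = revenue_per_interaction(attributed_revenue=84_000.0, interactions=10_000)

print(f"Conversion lift: {lift:.1%}")
print(f"Revenue per AI-assisted interaction: ${per_touch:.2f}")
```

The control group is the design choice that matters here. Without one, a conversion number describes what happened and says nothing about whether AI caused it.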

Here’s an example: I worked with a retail banking client whose AI-powered chatbot handled 70% of customer inquiries, a clear Layer 1 win. But when we looked at the data, customers who interacted with the chatbot were actually churning faster, because unresolved issues were being marked as “handled.” We redesigned the measurement: instead of tracking ticket deflection, we tracked resolution satisfaction and downstream product uptake. Within two quarters, the same chatbot, with its logic retuned to those metrics, was driving an 8% increase in cross-sell conversion. The core technology didn’t change. The measurement did.
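
The difference between deflection and real resolution can be sketched in a few lines of Python. The `Ticket` fields and the sample data below are hypothetical, not the client’s actual schema or numbers. The point is only that the same ticket log can produce a flattering metric and an honest one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ticket:
    bot_handled: bool         # closed by the chatbot without a human agent
    customer_confirmed: bool  # customer confirmed the issue was resolved
    recontacted: bool         # customer came back about the same issue


def deflection_rate(tickets: list[Ticket]) -> float:
    """Share of all tickets the chatbot closed, resolved or not."""
    return sum(t.bot_handled for t in tickets) / len(tickets)


def verified_resolution_rate(tickets: list[Ticket]) -> float:
    """Share of bot-handled tickets that were confirmed and never reopened."""
    bot_tickets = [t for t in tickets if t.bot_handled]
    if not bot_tickets:
        return 0.0
    verified = sum(t.customer_confirmed and not t.recontacted for t in bot_tickets)
    return verified / len(bot_tickets)


# Hypothetical sample: 7 of 10 tickets are bot-handled, but only 3 truly resolved.
tickets = (
    [Ticket(True, True, False)] * 3     # bot-handled and genuinely resolved
    + [Ticket(True, False, True)] * 2   # marked handled, customer came back
    + [Ticket(True, False, False)] * 2  # marked handled, never confirmed
    + [Ticket(False, True, False)] * 3  # resolved by a human agent
)

print(f"Deflection rate: {deflection_rate(tickets):.0%}")
print(f"Verified resolution rate: {verified_resolution_rate(tickets):.0%}")
```

In this made-up sample the deflection rate looks healthy while fewer than half of the bot-handled tickets were actually resolved, which is the kind of gap the redesigned measurement was meant to expose.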

Layer 3: Strategic Metrics — “Is AI changing how we compete?”

This is where the 34% that Deloitte calls “truly reimagining the business” operate. These metrics are harder to measure but they’re what separates AI as a tool from AI as a competitive advantage.

| Metric | What It Tells You | Example |
|---|---|---|
| New revenue streams | Has AI created business that didn’t exist before? | AI-powered advisory service generating $2M ARR |
| Decision velocity | Are leaders making better decisions faster? | Board-ready market analysis in hours instead of weeks |
| Talent leverage | Are your best people doing higher-value work? | Senior analysts shifted from data prep to strategy |
| Market responsiveness | Can you react faster than competitors? | Pricing adjustments that took days now happen in real-time |

One of the most striking examples I’ve seen was a government agency that started with AI for document processing (Layer 1 — efficiency). Over 18 months, they evolved it into a citizen insights platform that could predict service demand by district weeks in advance. That capability didn’t exist before AI — it became a new strategic asset that other agencies wanted access to. The original business case was “process forms faster.” The actual outcome was “redesign how government allocates resources.” That’s the jump from Layer 1 to Layer 3 — and it only happened because someone asked “what else can this data tell us?” after the efficiency win was proven.

The Bottom Line

The CEO becoming the Chief AI Officer is the right shift. But owning the decision means owning the measurement. If you’re only tracking adoption, the numbers may sound good, but you’re flying blind. And if you’re only tracking efficiency, you’re leaving revenue on the table.

Layer 1 tells you if AI works. Layer 2 tells you if AI pays. Layer 3 tells you if AI transforms. Most companies are stuck on Layer 1. The 20% that generate revenue have all three.

Read more: BCG AI Radar 2026, BCG Press Release, Deloitte — State of AI 2026, McKinsey — State of AI